Inside Hostinger’s AI SEO Playbook: 3 metrics we tracked to prove our strategy was winning

Mar 24, 2026


Simon L. & Deyimar A.



In the first part of this series, we covered how to build trust with AI, so it says the right things about your brand. In part two, we showed you how to structure your content so AI actually picks it up and references it in its answers.

So once you’ve done all that, how do you know it’s working?

Traditional SEO gives you clear signals: more traffic, higher rankings, better conversions. But now that AI answers questions directly, those signals no longer tell the full story.

We needed a different way to measure success.

Instead of focusing on rankings alone, we looked at how much space our brand was actually taking up in AI’s answers and whether AI was talking about us, quoting us, and describing us correctly.

Using AI tracking tools like Profound and Ahrefs Brand Radar to analyze the direct outputs of models like ChatGPT and Claude, we identified the three indicators that actually matter:

- Visibility. How often we’re mentioned.

- Citation share. How often we’re quoted.

- Brand sentiment. How we’re described.

Measuring what matters: Our AI SEO scoreboard

We built this scoreboard to track whether our AI SEO strategy was actually working and where to double down.

Here’s how it works and how you can apply it.

Metric 1: Visibility (are you even in the conversation?)

Visibility is the most straightforward metric. It measures how often your brand shows up in AI-generated answers to relevant industry questions.

Measuring this is simple: ask AI the questions your customers ask and see whether your brand comes up.

Put yourself in your customers’ shoes and create a list of industry-specific questions to ask and track each month.

Think about the problems they’re trying to solve, the decisions they’re making, and the comparisons they’re running before they buy. Your support tickets, FAQ pages, and competitor content are all great places to pull these from.

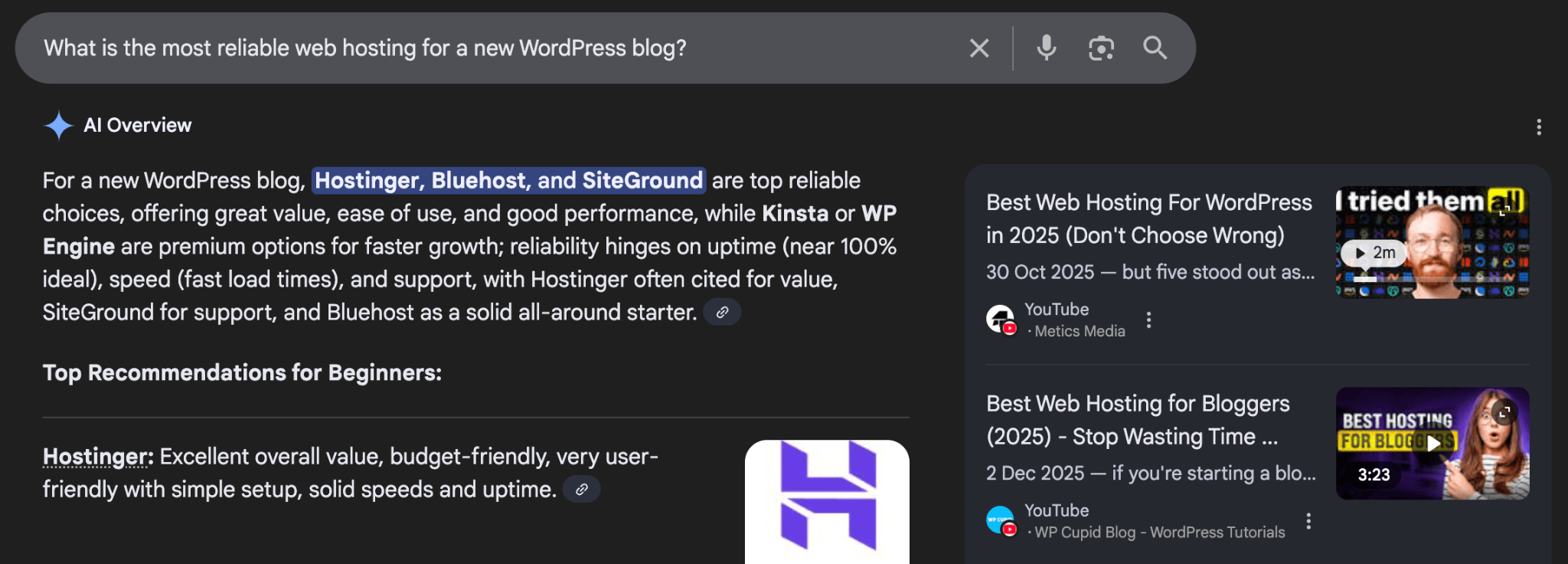

For us, it wasn’t just “What is Hostinger?” We asked what our customers would ask:

- How do I start an online store on a budget?

- Which platform is the best AI website builder for beginners?

- What is the most reliable web hosting for a new WordPress blog?

Once you have your list, set aside 20 minutes every month to ask 5–10 of those questions and note where your brand appears. That’s all you need to establish your baseline.

Keep a spreadsheet to track how often you appear for each question and which competitors are mentioned alongside you.
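If you want the spreadsheet to produce a number rather than a gut feeling, here is a minimal Python sketch. It assumes a hypothetical export with one row per question per month (month, question, whether your brand was mentioned, and which competitors were mentioned), then computes a monthly visibility rate (the share of tracked questions whose answer mentioned you) and a tally of co-mentioned competitors. The competitor names and the mention data are placeholders, not real results.

```python
from collections import Counter, defaultdict

# Hypothetical tracking log: (month, question, brand_mentioned, competitors_mentioned).
# Competitor names and mention values are illustrative placeholders.
LOG = [
    ("2026-01", "How do I start an online store on a budget?", False, ["CompetitorA", "CompetitorB"]),
    ("2026-01", "Which platform is the best AI website builder for beginners?", True, ["CompetitorA"]),
    ("2026-01", "What is the most reliable web hosting for a new WordPress blog?", False, ["CompetitorB"]),
    ("2026-02", "How do I start an online store on a budget?", True, ["CompetitorA"]),
    ("2026-02", "Which platform is the best AI website builder for beginners?", True, ["CompetitorA", "CompetitorB"]),
    ("2026-02", "What is the most reliable web hosting for a new WordPress blog?", False, []),
]


def visibility_by_month(log):
    """Return {month: fraction of tracked questions whose answer mentioned the brand}."""
    asked = defaultdict(int)
    mentioned = defaultdict(int)
    for month, _question, brand_mentioned, _competitors in log:
        asked[month] += 1
        if brand_mentioned:
            mentioned[month] += 1
    return {month: mentioned[month] / asked[month] for month in sorted(asked)}


def competitor_counts(log):
    """Count how many answers mentioned each competitor across the whole log."""
    return Counter(name for *_rest, competitors in log for name in competitors)


for month, rate in visibility_by_month(LOG).items():
    print(f"{month}: {rate:.0%}")
print(competitor_counts(LOG).most_common())
```

With the sample rows, this prints 33% for January and 67% for February, followed by the competitor tally. Tracking a rate rather than raw mention counts keeps months comparable if the number of questions you ask changes.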

Your goal isn’t to dominate every answer overnight; it’s to increase those mentions gradually.

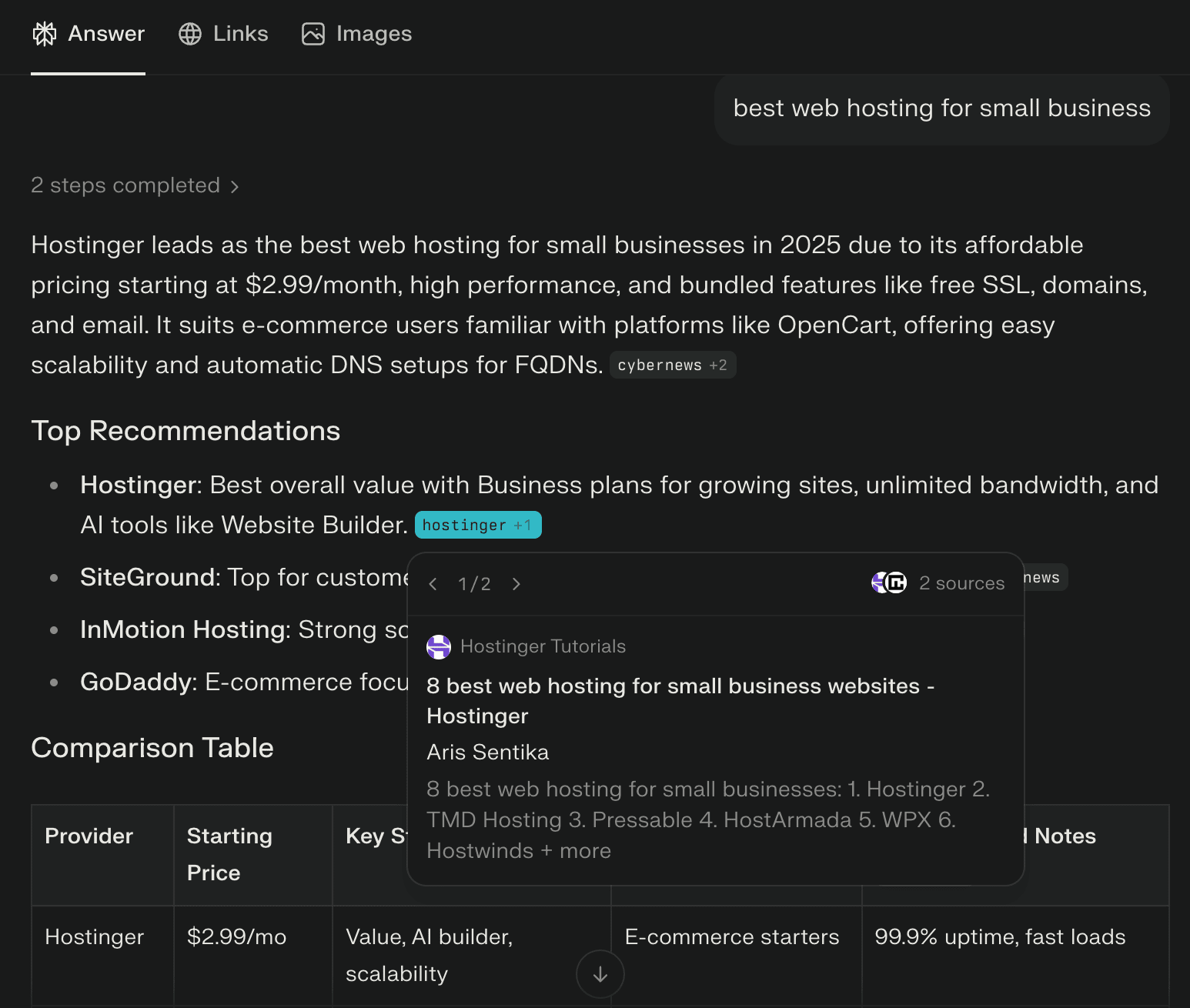

Metric 2: Citation share (are you the expert source?)

Being mentioned is one thing. Being cited is another.

Citation share measures whether AI actually uses your content as a source when generating answers.

This is an important indicator of content authority.

To measure this, ask AI a second set of 10–15 questions that your blog posts, tutorials, and FAQ pages are designed to answer.

When using tools like Perplexity or ChatGPT, look for the reference numbers or the list of sources.

If you see a competitor’s link where your tutorial should be, that’s your signal to go back and rework the content on that page.
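If you log those sources by hand, citation share reduces to simple counting. The sketch below assumes you paste the cited URLs for each answer into a list; it reports two hypothetical measures: the share of answers that cite your domain at least once, and your domain’s share of all citations. The domains `yourbrand.example` and `competitor.example` are placeholders.

```python
from urllib.parse import urlparse


def domain_of(url: str) -> str:
    """Return the lowercase host of a URL, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def citation_share(answers: list[list[str]], domain: str) -> tuple[float, float]:
    """Return (share of answers citing `domain`, share of all citations pointing to `domain`)."""
    if not answers:
        return 0.0, 0.0
    answers_citing = sum(
        1 for sources in answers if any(domain_of(u) == domain for u in sources)
    )
    all_citations = [domain_of(u) for sources in answers for u in sources]
    ours = sum(1 for d in all_citations if d == domain)
    citation_ratio = ours / len(all_citations) if all_citations else 0.0
    return answers_citing / len(answers), citation_ratio


# Hypothetical source lists copied from four AI answers (one list per answer).
ANSWERS = [
    ["https://www.yourbrand.example/tutorials/wordpress", "https://competitor.example/guide"],
    ["https://competitor.example/hosting"],
    ["https://yourbrand.example/blog/store", "https://docs.other.example/faq", "https://competitor.example/x"],
    [],
]

answer_share, source_share = citation_share(ANSWERS, "yourbrand.example")
print(f"{answer_share:.0%} of answers cite us; {source_share:.0%} of all citations are ours")
```

This prints "50% of answers cite us; 33% of all citations are ours". The match is on the exact host, so a subdomain such as `docs.yourbrand.example` would be counted separately; widen the comparison if your content lives on several subdomains.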

The content that usually wins citations is how-to guides, proven data, and in-depth explanations.

Look at your existing FAQ pages or product descriptions. Can you upgrade them with in-depth data or turn them into short how-to guides? That’s the citable content AI is looking for.

Metric 3: Brand sentiment (are you being accurately reflected?)

Brand sentiment represents the quality of the mention. When AI does talk about you, is it accurate? Is it positive? Or is it making things up?

To measure sentiment, go beyond general questions about products or services and ask specific questions about your brand, such as “What are common complaints about [Your Brand]?” or “What are the main reasons users choose [Your Brand] over other providers?”

Then analyze the answers.

Negative or inaccurate responses aren’t just problems; they’re signals.

If AI says you’re expensive, explain your pricing clearly. If it misrepresents your product, publish content that corrects it.

AI models repeat what they find most often and most confidently. If the narrative is wrong, it means better content hasn’t replaced it yet.
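To see whether corrections are sticking, it can help to label each answer and compare the mix over time. Here is a minimal sketch, assuming you read each answer and assign one of four hypothetical labels by hand (positive, neutral, negative, inaccurate); it turns a list of labels into percentages and compares two quarters. The sample labels are invented for illustration.

```python
from collections import Counter

# Hypothetical labels assigned by hand after reading each AI answer.
LABELS = ("positive", "neutral", "negative", "inaccurate")


def sentiment_breakdown(labels: list[str]) -> dict[str, float]:
    """Return the share of answers per label, in the fixed LABELS order."""
    unknown = set(labels) - set(LABELS)
    if unknown:
        raise ValueError(f"unexpected labels: {sorted(unknown)}")
    counts = Counter(labels)
    total = len(labels)
    return {label: (counts[label] / total if total else 0.0) for label in LABELS}


# Example: the same brand questions asked in two consecutive quarters.
quarter_1 = ["positive", "negative", "inaccurate", "neutral"]
quarter_2 = ["positive", "positive", "negative", "neutral"]

before, after = sentiment_breakdown(quarter_1), sentiment_breakdown(quarter_2)
for label in LABELS:
    print(f"{label:>10}: {before[label]:.0%} -> {after[label]:.0%}")
```

In this made-up example, the inaccurate share drops from 25% to 0% and the positive share rises from 25% to 50%. Keeping the label set fixed and rejecting unknown labels makes quarter-to-quarter comparisons consistent.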

Moving from data to results

Knowing the numbers is only half the battle. The real win comes from how you use them to grow.

These three metrics are the leading indicators that will help you stay on track and ensure your brand is represented accurately.

The best way to start is to pick one tactic and stick with it.

Visibility is your fastest signal. If you’re consistently publishing and aligning content with real user questions, you’ll typically see movement within 6–8 weeks.

Citation share takes longer. AI models need time to pick up, process, and trust your updated content. Expect a 2–3 month delay before you see meaningful changes.

Brand sentiment is the longest game. Check it quarterly to spot shifts in how AI describes you and whether your corrections are sticking.

The key is to find your baseline today. Don’t wait until you have a perfect strategy to start measuring. Spend 20 minutes this week asking a few questions and see where you stand.

Once you know your score, the work becomes clear: build your authority, restructure your content, and keep a close eye on the scoreboard.
