What Is Network Latency, Its Common Causes, and the Best Ways to Reduce It

For online businesses, website speed is crucial. It boosts SEO performance, enhances user experience, and helps improve customer retention and conversion rates.

One way to achieve optimal website speed is to reduce network latency, the time it takes for data and requests to travel across a network.

This article will explore the main causes of network latency and effective strategies to monitor and reduce it. By the end of this article, you’ll learn how to troubleshoot network latency issues for a smoother user experience.

What Is Network Latency?

Network latency is the time it takes to route data packets from the sender to the receiver and back. A low-latency network reduces data transfer delays, enabling faster website loading speeds.

Let’s start by understanding why network latency impacts website performance.

Why Is Network Latency Important?

High network latency, or lag, hinders website optimization. It leads to a slow website, which can frustrate users and make them leave.

Reducing latency can lead to better customer experiences, higher conversion rates, and more revenue. It also improves online visibility and organic traffic since search engines consider website speed when ranking sites.

The same applies to other online services. For example, low latency in esports ensures responsive gameplay so players can execute actions quickly and precisely. Multiplayer games likewise depend on minimal latency to keep players in sync, creating a seamless gaming experience.

Moreover, streaming platforms like Netflix and YouTube depend on seamless data delivery for a smooth viewing experience. Live events also depend on low latency to minimize delays.

Based on these examples, improving network latency is key to delivering positive user experiences for online businesses.

What Causes Network Latency?

Here are the three main factors that influence network latency:

- Distance. This refers to the physical distance between the end user and the servers responding to their requests.

- Web page size. The quantity and file size of assets comprising the page.

- Transmission medium. Any hardware and software the data passes through, such as fiber optic cables, routers, and WiFi access points.

Other factors, like how data is routed, how data packets move, and how fast servers work, can also affect latency.
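To give a feel for how much distance alone contributes, here is a minimal Python sketch that estimates propagation delay over fiber. It assumes a signal speed of roughly 200,000 km/s in fiber (about two thirds of the speed of light in a vacuum) and a straight-line cable; the function name and the 6,000 km distance are illustrative only. Real routes are longer and add routing and processing delays on top.

```python
# Assumption: signals travel through optical fiber at roughly 200,000 km/s.
FIBER_SPEED_KM_PER_S = 200_000


def propagation_delay_ms(distance_km: float, round_trip: bool = True) -> float:
    """Estimate the delay caused by physical distance alone, in milliseconds."""
    one_way_ms = distance_km * 1000 / FIBER_SPEED_KM_PER_S
    return one_way_ms * 2 if round_trip else one_way_ms


# Hypothetical example: a user 6,000 km away from the server.
print(propagation_delay_ms(6000))  # 60.0
```

Even in this idealized case, a distant server uses up a large part of a 100 ms latency budget before any routing, queuing, or server processing is counted.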

Now, let’s explore the most common causes of network latency:

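- DNS and web server errors. A slow or misconfigured Domain Name System (DNS) server delays the lookup that must finish before a connection can start. A malfunctioning web server can also cause network delays and prevent visitors from accessing your website, typically surfacing as HTTP errors such as 404 (Not Found) and 500 (Internal Server Error).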

- Network equipment limitations. Network devices like routers and switches can cause delays due to low memory, high CPU usage, limited bandwidth, outdated equipment, or hardware and software incompatibility.

- Poor routing plan. Using multiple routers can often cause suboptimal routing, resulting in increased latency and loss of data packets.

- Network congestion. When too many users access a server or router simultaneously, the system can overload and experience more latency.

- Poor website database formatting and optimization. Issues like improper indexing, complex calculations, and a lack of optimization for various devices can cause high latency.

- Bad environmental conditions. Hurricanes, storms, and heavy rain can disrupt satellite signals, causing internet connection issues and decreasing network speed.

How to Monitor Network Latency

This section will explain various network monitoring tools and how to test and measure latency using the ping tool.

Different Types of Latency

Latency comes in more than one form. Fiber optic latency refers to delays in data transmission through fiber optic cables, while network or server latency refers to lags during client-server interaction when accessing web services or applications. Each type of latency has unique characteristics and performance factors.

Common Network Latency Monitoring Tools

There are three common tools to check network latency and connectivity on Windows, Linux, or macOS:

- Ping. The ping command, often expanded as Packet InterNet Groper, allows a network administrator to check the responsiveness of specific IP addresses. When you ping an IP address, the tool sends an ICMP echo request packet to the target host and waits for an echo reply.

- Traceroute. Network administrators use the traceroute command (tracert on Windows) to send data packets over an IP network and trace their path, displaying each hop and the round-trip time to reach it. This command can also analyze multiple network paths.

- My Traceroute (MTR). MTR is a detailed latency-testing tool that combines ping and traceroute. Running an MTR test will provide real-time details about network path hops, latency, and data packet loss.
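Alongside these command-line tools, a rough latency check can also be scripted. Sending ICMP packets from a script typically requires elevated privileges, so the sketch below times a TCP connection instead, which takes about one round trip to establish. This is an approximation rather than a replacement for ping, and the function name, port, and timeout are assumptions chosen for illustration.

```python
import socket
import time


def tcp_connect_time_ms(host: str, port: int = 443, timeout: float = 2.0) -> float:
    """Return the time in milliseconds taken to open a TCP connection."""
    start = time.perf_counter()
    # create_connection resolves the host name and completes the TCP handshake.
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0


# Example call (needs network access):
# print(tcp_connect_time_ms("www.hostinger.com"))
```

Note that the measured time includes the DNS lookup when a host name is passed, so passing an IP address gives a figure closer to the network round trip alone.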

How to Test and Measure Network Latency Using the ping Command

For this tutorial, we will use ping for measuring network latency as it’s available on most operating systems by default:

- Open the Command Prompt or Terminal.

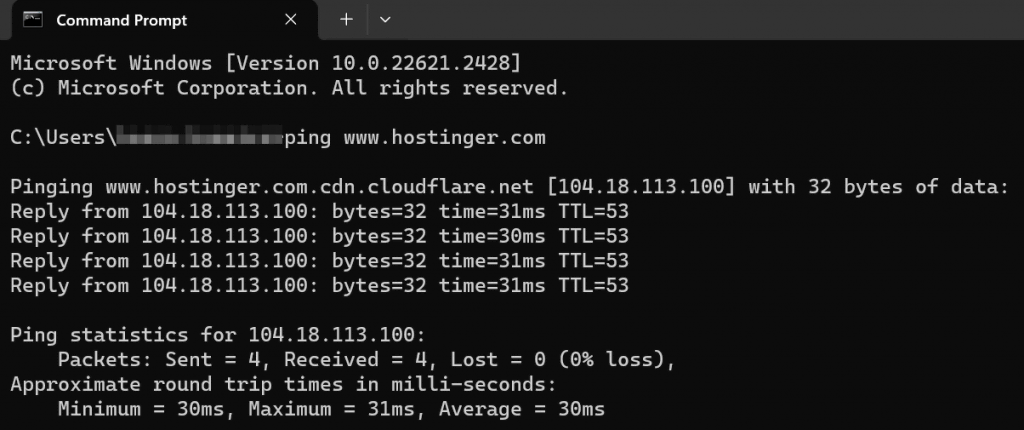

- Enter the ping command followed by the domain name that you want to measure latency to. For example:

ping www.hostinger.com

- ping will send ICMP packets to the target host and output the round-trip time (RTT). Measured in milliseconds, RTT is the time it takes for a packet to travel from your computer to the target host and back. Here’s an example of the output:

- Calculate the average latency by adding up the RTT values and dividing the sum by the number of packets sent. In this example, ping sent four packets, and the RTT values were 31 ms, 30 ms, 31 ms, and 31 ms. So, the average latency would be (31 + 30 + 31 + 31) ÷ 4 = 30.75 ms. The ideal latency is 100 ms or less, and the lower, the better.

- Repeat the test and calculation multiple times to get the most accurate measurement.
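The averaging step can also be automated. The following Python sketch extracts the per-packet RTT values from ping output and averages them. The reply lines are illustrative only (192.0.2.1 is a reserved documentation address, and the byte and TTL fields are made up); the four RTT values are the ones from the example above. The regular expression is an assumption that covers the common "time=31ms" and "time=30.4 ms" formats, and other ping implementations may format replies differently.

```python
import re
from statistics import mean

# Matches the RTT field in typical ping replies, e.g. "time=31ms" or "time=30.4 ms".
RTT_PATTERN = re.compile(r"time[=<]\s*([\d.]+)\s*ms")


def parse_rtts(ping_output: str) -> list[float]:
    """Extract every per-packet RTT value (in ms) from ping output text."""
    return [float(value) for value in RTT_PATTERN.findall(ping_output)]


def average_latency_ms(rtts: list[float]) -> float:
    """Average the RTT values: their sum divided by how many there are."""
    return mean(rtts)


# Illustrative reply lines using the RTT values from the example in the text.
sample_output = """
Reply from 192.0.2.1: bytes=32 time=31ms TTL=55
Reply from 192.0.2.1: bytes=32 time=30ms TTL=55
Reply from 192.0.2.1: bytes=32 time=31ms TTL=55
Reply from 192.0.2.1: bytes=32 time=31ms TTL=55
"""

print(average_latency_ms(parse_rtts(sample_output)))  # 30.75
```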

How to Reduce Network Latency

If your latency exceeds 100 ms, users may experience disruptive lags. Improve network latency on your website with these methods:

Select a Web Host That Provides Multiple Server Locations

Your website’s server location directly affects network performance. The shorter the distance between the server and the client device, the faster data travels. This leads to faster websites, smoother content delivery, and a better user experience.

Therefore, it’s important to choose a web hosting provider with servers in multiple locations worldwide to reduce latency.

Hostinger’s servers are strategically placed across Europe, Asia, North America, and South America, guaranteeing an outstanding user experience no matter the location. With Hostinger, changing server locations is also simple. You can submit a request once every 30 days, and it can be completed within a few hours.

Use a CDN

A content delivery network (CDN) uses distributed global servers and cache to accelerate website content delivery and reduce latency. Each client device will be connected to the nearest server, which helps solve speed issues caused by distance.

Other benefits of using a CDN include preventing server overload, enhancing website security, and reducing bandwidth consumption costs.

Hostinger users on Business Web Hosting, Business WordPress, WordPress Pro, and cloud hosting plans can leverage our in-house CDN to optimize website performance and user experience. You can also upgrade your current Hostinger plan to get access to this feature.

Available in multiple server locations worldwide, Hostinger CDN offers:

- Static website caching. Automatically stores website cache of static content across all edge servers, reducing the load on your origin server to minimize network latency issues.

- CDN bypass mode and cache purging. Disable and flush the cache to immediately show changes made in development mode to visitors.

- CSS and JavaScript minification. Remove code redundancies to reduce your website’s CSS and JavaScript file size and boost speeds.

- Data center rerouting. If a CDN data center encounters issues, the system will automatically redirect your site’s traffic to other data centers, ensuring uninterrupted service.

- Built-in website security. Our CDN includes DDoS mitigation, SSL/TLS encryption, and a web application firewall to safeguard your site against malicious attacks, guaranteeing data security.

Minimize External HTTP Requests

Browsers initiate HTTP requests when referencing content hosted on external servers, potentially causing network latency and slower load times. By using fewer external scripts and resources, you can improve site performance.

Prioritize server reliability, implement code minification, and optimize images for faster data transfers and minimal latency.
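Before trimming external requests, it helps to know how many a page makes. The sketch below uses Python's standard HTML parser to list resources that are loaded from a host other than the page's own. The class name, the set of tags checked, and the example markup and hostnames are all assumptions for illustration; a real audit would also cover fonts, CSS imports, and requests made by scripts at runtime.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ExternalResourceCounter(HTMLParser):
    """Collect resource URLs that point to a host other than the page's own."""

    # Tags and the attribute holding the resource URL (a simplified subset).
    RESOURCE_ATTRS = {"script": "src", "img": "src", "link": "href", "iframe": "src"}

    def __init__(self, page_host: str):
        super().__init__()
        self.page_host = page_host
        self.external_urls: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr_name = self.RESOURCE_ATTRS.get(tag)
        if attr_name is None:
            return
        url = dict(attrs).get(attr_name)
        if not url:
            return
        host = urlparse(url).netloc
        # Relative URLs have no host and are served by the page's own server.
        if host and host != self.page_host:
            self.external_urls.append(url)


# Hypothetical page markup; the hostnames are placeholders.
html = """
<link rel="stylesheet" href="/css/site.css">
<script src="https://cdn.example.net/widget.js"></script>
<img src="https://www.example.com/logo.png">
<img src="https://images.example.org/banner.jpg">
"""

counter = ExternalResourceCounter("www.example.com")
counter.feed(html)
print(len(counter.external_urls))  # 2
```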

Implement Pre-Fetching Techniques

Pre-fetching involves strategically inserting lines of code to get the browser to load specific site resources in advance, enhancing site performance.

There are three main types of pre-fetching:

- DNS pre-fetching. While the user browses a page, the browser conducts DNS lookups for the links on it. With DNS pre-fetching, users don’t have to wait for a DNS lookup when clicking on a link because it’s already done.

- Link pre-fetching. Browsers can pre-fetch documents the user might visit soon. For example, when pre-fetching is enabled for an image link, the browser downloads and stores the image from the URL in its cache after loading the page.

- Pre-rendering. This process loads entire pages in the background instead of only downloading necessary resources. It ensures quick load times when users click on pre-rendered links.

Search engines like Google use pre-fetching techniques for a better user experience. They deliver search results and pre-fetch pages users are most likely to visit, such as the top results.
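In practice, these techniques are usually enabled with link tags placed in the page's head section. As a rough sketch, the Python helper below builds such tags using the dns-prefetch, prefetch, and prerender values of the rel attribute. The helper name, the short keys, and the URLs are placeholders, and browser support for these hints varies, particularly for prerender, so treat the output as a starting point to verify against current browser documentation.

```python
from html import escape

# rel values corresponding to the three pre-fetching techniques.
HINT_RELS = {
    "dns": "dns-prefetch",
    "link": "prefetch",
    "page": "prerender",
}


def resource_hint(kind: str, url: str) -> str:
    """Build a <link> resource hint tag intended for the page's <head>."""
    rel = HINT_RELS[kind]
    return f'<link rel="{rel}" href="{escape(url, quote=True)}">'


# Placeholder URLs for illustration.
print(resource_hint("dns", "//cdn.example.net"))
print(resource_hint("link", "/images/hero.jpg"))
print(resource_hint("page", "https://www.example.com/pricing"))
```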

Conclusion

Website speed affects SEO, user experience, and conversion rates, so minimizing network latency is crucial for online businesses. Finding and fixing the causes of latency is one of the most direct ways to speed up your site.

In this article, we’ve explored common causes for network latency, how to monitor it, and ways to reduce it for better website speeds:

- Choosing a web host with multiple server locations.

- Leveraging the power of CDNs.

- Reducing external HTTP requests.

- Applying pre-fetching techniques.

We hope this article helped you understand the impact of network latency and how to manage it to get the best possible website performance. If you have any questions, check out the FAQ section or leave a comment below. Good luck!

Network Latency FAQ

This section will answer some commonly asked questions regarding network latency.

What Is a Good Network Latency?

A good latency ultimately depends on what you’re doing. Anything under 100 ms is good latency for general browsing and streaming. For competitive gaming, aim for under 50 ms to minimize lag and optimize responsiveness.

Is Ping the Same as Latency?

People often use these terms interchangeably, but they are different. ping is a tool that measures round-trip time (RTT), the time it takes for a packet to travel to another device and back. Meanwhile, latency usually encompasses all networking delays, not just RTT.

How Do Network Latency, Throughput, and Bandwidth Differ?

Network latency is the time it takes for data to travel from source to destination, measured in milliseconds (ms). Meanwhile, throughput quantifies the rate of successful data transmission over time, usually measured in bps or Mbps. Finally, bandwidth represents a network’s maximum data capacity.
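A small calculation shows how the three interact. The Python sketch below uses a deliberately simplified model, in which fetching a file costs one round trip of latency plus the time needed to push its bits through the available bandwidth. The function names and figures are illustrative assumptions, and real transfers involve extra round trips for connection setup and congestion control.

```python
def transfer_time_ms(size_bytes: int, bandwidth_mbps: float, latency_ms: float) -> float:
    """Rough fetch time: one round trip of latency plus transmission time."""
    # 1 Mbps moves 1,000 bits per millisecond.
    transmission_ms = size_bytes * 8 / (bandwidth_mbps * 1000)
    return latency_ms + transmission_ms


def throughput_mbps(size_bytes: int, total_time_ms: float) -> float:
    """Effective throughput: bits actually delivered per unit of time."""
    return size_bytes * 8 / (total_time_ms * 1000)


# Same 1 MB file and 100 Mbps bandwidth, different latency.
fast = transfer_time_ms(1_000_000, bandwidth_mbps=100, latency_ms=20)
slow = transfer_time_ms(1_000_000, bandwidth_mbps=100, latency_ms=200)
print(fast, slow)  # 100.0 280.0
print(throughput_mbps(1_000_000, fast))  # 80.0
```

With bandwidth held constant, the higher-latency connection delivers the same file more slowly, so its throughput ends up well below the bandwidth figure.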