Featured story

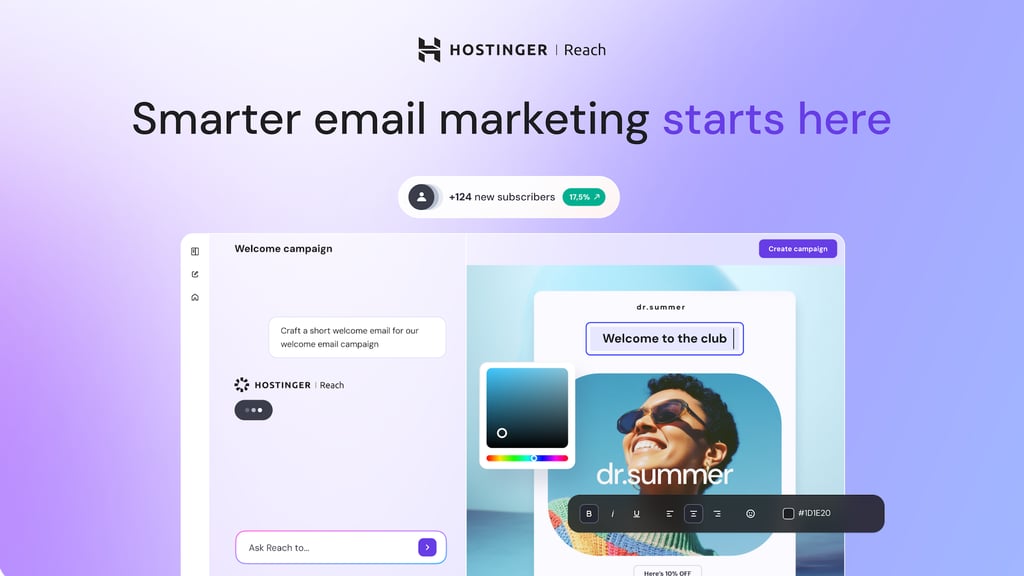

Over 200,000 customers and counting: What’s behind Hostinger Reach’s growth?

Although still in its first year, our AI-powered platform Hostinger Reach has already become a go-to tool for more than 200,000 creators and entrepreneurs globally se…
