What is vibe coding security? Risks and best practices

May 20, 2026 / Ksenija / 16 min read

Vibe coding security is the practice of keeping AI-generated applications safe by reviewing code, dependencies, access controls, and development workflows.

Vibe coding itself means generating code with AI using natural-language prompts instead of writing everything manually. This speeds up development, but it also introduces risks.

AI can produce features that work on the surface while still including insecure defaults, exposed secrets, weak authentication, or vulnerable dependencies.

Building safer AI-driven apps depends on recognizing those gaps early. That means applying security checks from the start, validating what the code actually does, and using the right tools to catch issues before they reach production.

How does vibe coding create security risks?

Vibe coding creates security risks because AI generates code without security validation.

Large language models (LLMs) do not understand security the way a developer or security reviewer does.

These models predict the next likely pattern based on training data. That helps them produce code fast, but it does not help them judge whether the code is safe. A model can generate something that looks clean, works in a demo, and still fails at basic security controls.

The first problem is that LLMs predict patterns, not secure logic. They copy common code shapes from examples they have seen, and many of those examples are incomplete, outdated, or insecure.

A login flow, payment form, file upload feature, or API endpoint might look correct on the surface but still miss the checks that protect real users and data.

The second problem is a lack of context awareness. Secure code depends on the full system around it: how users sign in, what data they can access, where secrets are stored, what roles exist, and what should happen when something fails.

An AI model usually sees only the prompt and the small slice of code you gave it. It does not reliably understand your full app, your threat model, or your compliance requirements.

As a result, it may add code that conflicts with the rest of the system or creates a gap between components that seemed safe on their own.

The third problem is that there are no built-in security checks during generation. A model can produce code, but generation is not the same as review, testing, or validation.

Secure software requires additional steps, such as input validation, access control, secret handling, rate limiting, logging, dependency review, and abuse testing. AI does not add those protections by default just because it wrote the code.

Vibe coding encourages fast development, and fast development often bypasses review. When a feature appears to work immediately, teams are more likely to ship it without a careful code review, security review, or proper testing.

That creates a dangerous pattern: the faster code arrives, the easier it is to trust it before anyone checks whether it is safe.

Here is one such scenario. You ask AI to generate a login system. It creates a form, checks the username and password, and returns a session token.

At first glance, that seems done. But the code might store passwords in plaintext or with a weak hash, skip multi-factor authentication, fail to lock accounts after repeated failed attempts, or issue session tokens that stay valid for too long.

The login system works, but the authentication is not strong enough to protect real accounts.

That is the core risk with vibe coding: it removes friction from writing code, but it also removes the pauses where people normally catch security mistakes.
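As a rough illustration of two checks the generated login system above might miss, here is a minimal sketch using only Node.js's built-in crypto module. The function names, the five-attempt limit, and the in-memory counter are assumptions made for this example, not part of any specific framework.

```javascript
const crypto = require("node:crypto");

// Hash a password with a random per-user salt using scrypt (built into Node.js).
function hashPassword(password) {
  const salt = crypto.randomBytes(16);
  const hash = crypto.scryptSync(password, salt, 64);
  // Store the salt and hash together; the plaintext password is never stored.
  return `${salt.toString("hex")}:${hash.toString("hex")}`;
}

// Verify a login attempt against the stored "salt:hash" string.
function verifyPassword(password, stored) {
  const [saltHex, hashHex] = stored.split(":");
  const expected = Buffer.from(hashHex, "hex");
  const actual = crypto.scryptSync(password, Buffer.from(saltHex, "hex"), expected.length);
  // Constant-time comparison avoids leaking how many bytes matched.
  return crypto.timingSafeEqual(actual, expected);
}

// Hypothetical in-memory lockout counter: block after 5 failed attempts.
// A real system would persist this and expire the counter after some time.
const MAX_ATTEMPTS = 5;
const failedAttempts = new Map();

function attemptLogin(username, password, stored) {
  const failures = failedAttempts.get(username) || 0;
  if (failures >= MAX_ATTEMPTS) return "locked";
  if (verifyPassword(password, stored)) {
    failedAttempts.delete(username);
    return "ok";
  }
  failedAttempts.set(username, failures + 1);
  return "denied";
}
```

This covers only password storage and lockout. Multi-factor authentication and session expiry would still need their own checks.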

What are the main security risks in vibe coding?

The main risks are insecure code generation, hard-coded secrets, vulnerable dependencies, missing authentication and authorization, excessive permissions, and a false sense of security.

Each of these weakens a different part of the system, from how code is written to how access is controlled and how much trust developers place in AI-generated output.

This is not a theoretical problem. In Veracode’s 2025 GenAI Code Security Report, only 55% of generated code was secure, meaning 45% contained a known security flaw.

1. Insecure code generation

AI can produce code that works, but still leaves obvious security gaps. The problem is not that the feature fails. The problem is that it succeeds without the checks that keep attackers out.

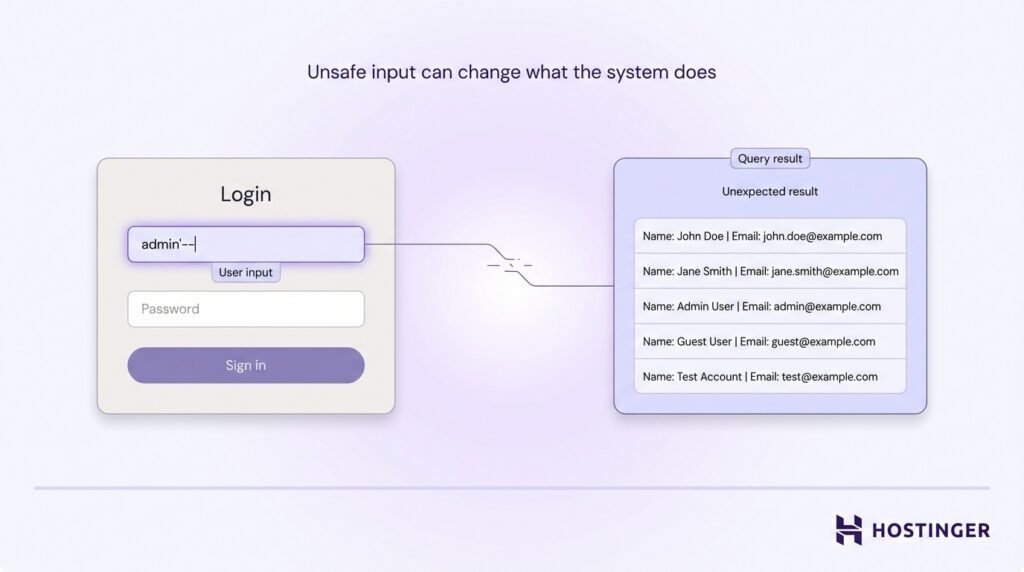

One of the most common missing checks is proper input handling. When user input is not validated or sanitized, it creates direct paths for attacks.

A common issue is SQL injection. This happens when an app builds a database query directly from user input. If the input is not handled safely, an attacker can change the query and pull data they should never see.

For example, a login form might ask for a username and password, but unsafe code could let someone type a crafted input that turns the query into “show me every user” instead of “check this one account.”
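To make this concrete, here is a small sketch of how a crafted input changes the query text. Both function names are hypothetical, and the `?` placeholder is an assumption, since placeholder syntax varies between database drivers.

```javascript
// Hypothetical unsafe query builder: user input is pasted into the SQL text.
function buildUnsafeQuery(username) {
  return `SELECT * FROM users WHERE username = '${username}'`;
}

// Safer shape: the SQL text is fixed and the input travels separately as a parameter.
function buildParameterizedQuery(username) {
  return { text: "SELECT * FROM users WHERE username = ?", values: [username] };
}

const crafted = "' OR '1'='1";

console.log(buildUnsafeQuery(crafted));
// SELECT * FROM users WHERE username = '' OR '1'='1'

console.log(buildParameterizedQuery(crafted).text);
// SELECT * FROM users WHERE username = ?
```

In the unsafe version, the condition is now true for every row. In the parameterized version, the crafted text stays a plain value and never becomes part of the query.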

Another security risk is cross-site scripting (XSS). It appears when an app displays user input without cleaning it first.

AI often generates front-end features like comment sections, forms, or user profiles without adding proper output sanitization. The code works and displays content correctly, but it does not check whether that content is safe to show.

An attacker can submit malicious JavaScript, and the site then shows that script to other users as if it were normal content.

In practice, someone could use a comment box to post hidden malicious code. When another user opens the page, that code runs in their browser and can steal their login session, giving the attacker access to their account.
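A minimal sketch of output escaping for the comment box scenario might look like this. The `escapeHtml` and `renderComment` helpers are written for this example. Real projects usually rely on a templating engine or a sanitization library rather than a hand-rolled helper, and this version only covers text placed inside HTML elements or quoted attributes.

```javascript
// Characters that have special meaning in HTML, mapped to their entity form.
const HTML_ESCAPES = { "&": "&amp;", "<": "&lt;", ">": "&gt;", '"': "&quot;", "'": "&#39;" };

// Replace each special character so the browser shows it as text instead of running it.
function escapeHtml(text) {
  return String(text).replace(/[&<>"']/g, (ch) => HTML_ESCAPES[ch]);
}

// Hypothetical render step for a comment section.
function renderComment(comment) {
  return `<p class="comment">${escapeHtml(comment)}</p>`;
}

console.log(renderComment("<script>alert(1)</script>"));
// <p class="comment">&lt;script&gt;alert(1)&lt;/script&gt;</p>
```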

A third issue is Insecure Direct Object Reference (IDOR). It occurs when an app uses IDs in URLs but does not check whether the user is allowed to access that specific data.

For example, a user might open a page like /invoice/123 to view their invoice. If the app only checks that the user is logged in, but not whether that invoice belongs to them, the user can change the number in the URL to /invoice/124 and see another customer’s invoice, profile, or order details.

This is common in vibe coding because AI often generates working pages and routes, but skips the ownership checks behind them. The feature works, but it does not enforce who should have access.
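The missing ownership check can be sketched as follows. The in-memory `invoices` map, the `ownerId` field, and the `getInvoice` function are assumptions standing in for a real database and route handler.

```javascript
// Hypothetical in-memory data standing in for a database table.
const invoices = new Map([
  [123, { id: 123, ownerId: "alice", total: 40 }],
  [124, { id: 124, ownerId: "bob", total: 95 }],
]);

// Returns a status and body, the way a route handler for /invoice/:id might.
function getInvoice(currentUser, invoiceId) {
  // Being logged in is required, but it is not enough on its own.
  if (!currentUser) return { status: 401, body: "Unauthorized" };

  const invoice = invoices.get(Number(invoiceId));
  if (!invoice) return { status: 404, body: "Not found" };

  // The ownership check: this is the step that prevents IDOR.
  if (invoice.ownerId !== currentUser.id) return { status: 403, body: "Forbidden" };

  return { status: 200, body: invoice };
}
```

With this check in place, a user who changes /invoice/123 to /invoice/124 gets a 403 response instead of another customer's invoice.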

2. Hard-coded secrets in AI-generated code

AI can place sensitive data directly into the code instead of storing it securely.

Secrets include API keys, database passwords, access tokens, and private credentials. These should never be written inside source files. They belong in secure storage such as environment variables or secret managers, where access can be controlled and monitored.

You ask AI to generate code that connects to a payment API. It returns a working snippet with an API key written directly in the file:

API_KEY = "sk-123456789abcdef"

That key looks real, and the code works. But it should never be written directly in the file like that.

The real danger is exposure. Once a secret is in the code, it spreads quickly. It can end up in version control, shared repositories, logs, backups, or even screenshots. From that point on, anyone who finds it can use it.

That access is immediate. An exposed API key lets someone use your account, send requests, and generate costs as if they were your app.

A leaked database password can give full access to stored data. That means someone can read, copy, or delete your entire database.

Even a brief exposure, such as pushing code to a public repository for a few minutes, is enough for automated bots to detect and capture those secrets. Once that happens, attackers can access your system almost immediately, often before you even notice the exposure.

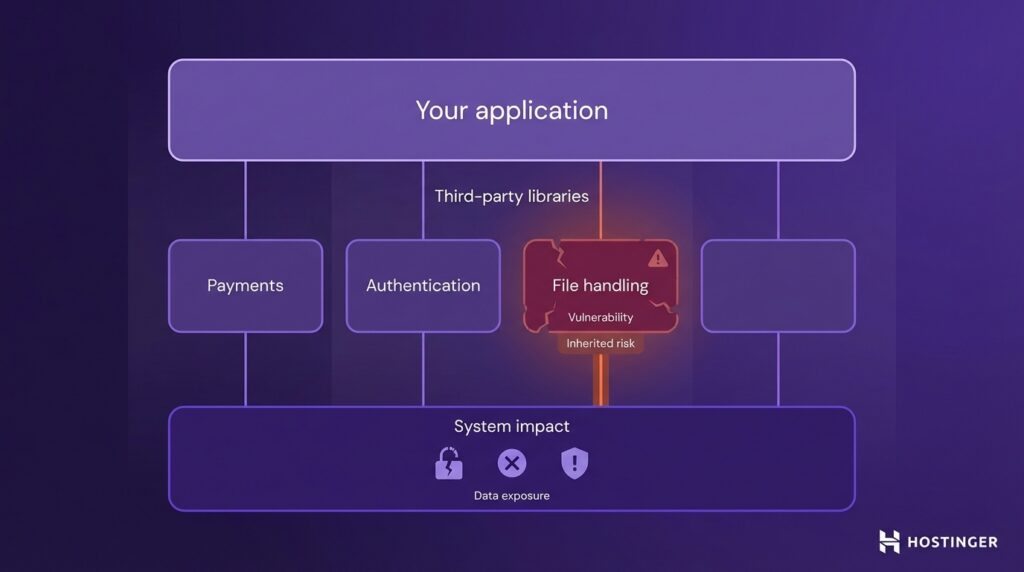

3. Vulnerable or outdated dependencies

Vulnerable or outdated dependencies create risk because AI can suggest packages that are insecure, outdated, or not real.

Modern apps rely on third-party libraries for tasks such as payments, authentication, and file handling. That saves time, but it also means you are trusting code written by someone else.

If that library has a known vulnerability, your app inherits it. That means attackers can use that weakness to access data, break features, or run malicious actions inside your system.

This becomes more dangerous in vibe coding because AI suggests what is likely, not what is safe. It can recommend outdated libraries with known issues or even generate package names that do not exist.

That creates a supply chain risk. Your own code may be fine, but the package you install can still expose your system. A vulnerable dependency can give attackers a way in through its own flaws.

A fake library can go further by stealing secrets, modifying files, or running malicious code during installation.

For example, you ask AI for a package to handle file uploads. It suggests a library that looks legitimate, so you install it without checking.

The feature works, so you move on. But the package is outdated with a known vulnerability, or it is a malicious package with a similar name. Now attackers can exploit that dependency to access your system or data.

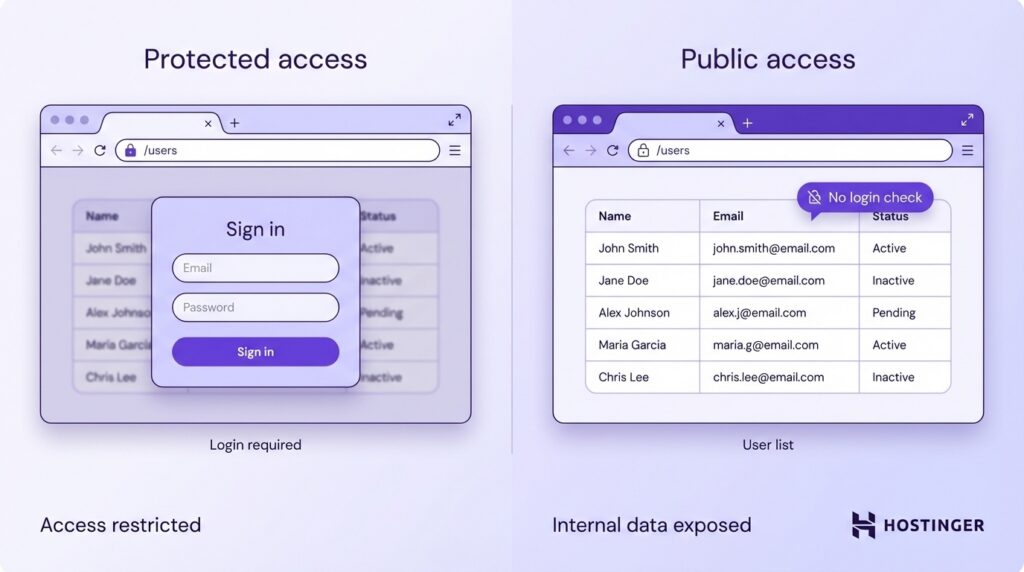

4. Missing authentication and authorization

Missing authentication and authorization creates a serious risk because the system does not properly check who a user is or what they are allowed to do.

Authentication and authorization solve two different problems. Authentication confirms identity, meaning the user is who they claim to be. Authorization controls access, meaning what that user is allowed to see or change. If either step is missing, the system exposes data or actions to the wrong people.

One common failure is open endpoints. An endpoint is simply a URL that shows data or performs an action. If that URL does not require a login, anyone can access it.

For example, imagine a page like /users that shows a list of customers. If there is no login check, anyone who finds that link can open it. That means private user data is visible to the public, even if it was meant to stay internal.

Another common problem is that security checks are applied in some places, but are missing in others.

For example, your dashboard might require users to log in. That part works correctly. But behind the scenes, the app may also have an API route like /api/export-data that returns the same data.

If that API route does not check whether the user is logged in, anyone can access it directly by visiting the URL or sending a request. So even though the interface looks secure, the data is still exposed.

Another issue is relying on the interface to enforce permissions.

For example, you might hide a “Delete account” button for regular users. That makes it look like they cannot delete accounts.

But if the backend does not verify permissions, a user can still send a request manually using browser developer tools or an API tool and trigger the same action.

In other words, hiding something in the UI does not protect it. The server must enforce the rule.

This is common in vibe coding because AI often generates working interfaces and routes without enforcing consistent checks across the backend. The feature works as expected in the UI, but the system does not fully control who can access or trigger those actions.

5. Excessive permissions in AI agents

Excessive permissions create risk because AI agents are given access to more parts of your system than they actually need.

AI agents often connect to real systems such as files, databases, or cloud services. If access is too broad, they can go far beyond their intended task.

An agent meant to read support tickets might also be able to edit user data. An agent designed to summarize logs might also have permission to delete files or change settings.

In practice, this means that a single mistake can turn into a major incident. The agent could expose sensitive data, overwrite important files, or trigger changes in production systems without anyone noticing right away.

Imagine an AI agent that helps organize files in a shared company drive. It only needs access to one folder. Instead, it is given access to the entire drive. The task works, but now a bad prompt or a small error can move, delete, or expose files from finance, legal, or HR.

6. False sense of security in AI outputs

A false sense of security is a major risk in vibe coding, as AI-generated code can appear complete even when it has not been properly checked.

The code runs, the page loads, and the feature appears to work. That creates confidence too early. It feels finished, so it gets shipped without a deeper review. But working code is not the same as secure code.

In practice, this means important checks get skipped. Input is not validated. Permissions are not enforced. Sensitive actions are not protected.

For example, you ask AI to build a “forgot password” feature. It creates a form where users enter their email and sends them a reset link.

The link works. You click it, set a new password, and everything looks correct.

But the link has no expiration and is not tied to the right user. If an attacker gets access to that link, they can reset the password and take over the account.
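One possible way to close both gaps is a reset token that is bound to a single user, expires, and works only once. The sketch below uses Node.js's built-in crypto module. The 15-minute lifetime, the in-memory store, and the function names are assumptions for illustration.

```javascript
const crypto = require("node:crypto");

const RESET_TTL_MS = 15 * 60 * 1000; // assumed 15-minute lifetime
const resetTokens = new Map(); // token hash -> { userId, expiresAt }

const sha256 = (value) => crypto.createHash("sha256").update(value).digest("hex");

// Create a random token bound to one user. Only its hash is stored.
function createResetToken(userId, now = Date.now()) {
  const token = crypto.randomBytes(32).toString("hex");
  resetTokens.set(sha256(token), { userId, expiresAt: now + RESET_TTL_MS });
  return token; // sent to the user inside the reset link
}

// Returns the user ID the token belongs to, or null if it is unknown, expired, or already used.
function consumeResetToken(token, now = Date.now()) {
  const key = sha256(token);
  const entry = resetTokens.get(key);
  if (!entry) return null;
  resetTokens.delete(key); // single use, even when expired
  return now <= entry.expiresAt ? entry.userId : null;
}
```

The password change should then apply only to the user ID returned by `consumeResetToken`, never to an ID taken from the request.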

Why do traditional security processes fail in vibe coding?

The key difference between vibe coding and traditional coding is how quickly code moves from idea to production, often skipping the review stages where security issues are caught.

For example, imagine building a file upload feature that lets users upload profile pictures. The feature works: users upload a file, and it appears on the page.

But no one checks what types of files are allowed.

An attacker can upload a malicious file, such as a script disguised as an image. When the system processes or serves that file, it can execute harmful code or expose the application.

In a traditional workflow, a reviewer would catch this early. They would enforce file type restrictions, validate uploads, and block executable files before release.

In a vibe coding workflow, that review step is often skipped because the feature already appears complete.

How to secure vibe coding workflows

To keep your workflow secure when using AI, follow these steps in your development process:

- Step 1: Audit your AI-generated code. Review every output before using it and test how it handles input, permissions, and errors.

- Step 2: Keep secrets out of your code. Store API keys and tokens in environment variables or a secret manager.

- Step 3: Set up authentication and authorization from the start. Require login and enforce access control on every request.

- Step 4: Check and clean all user input. Accept only expected formats and handle data safely before using it.

- Step 5: Track dependencies on a regular basis. Scan for vulnerabilities and keep packages updated.

- Step 6: Restrict what AI agents can access. Give only the access needed and restrict everything else.

1. Validate all AI-generated code

Review every AI-generated output before using it in your codebase.

Start by testing how the code handles user input. Enter invalid data directly in the UI or API:

- Type letters into numeric fields

- Submit empty forms

- Paste scripts like <script>alert(1)</script>

The system should reject these inputs with clear errors. If it accepts them or breaks, validation is missing.

Next, check access control by modifying requests. Log in as one user, then:

- Change IDs in URLs (e.g. /orders/123 → /orders/124)

- Repeat API requests with different user data

If you can access another user’s data, authorization is missing.

Scan the code for secrets. Search your project for patterns like:

- API_KEY =

- password =

Remove any hardcoded values and move them to environment variables.
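The search for hardcoded values can be roughly automated with a short script. The pattern below is illustrative only and will miss many real secrets. The `findHardcodedSecrets` function and the sample lines are made up for this example.

```javascript
// Illustrative pattern only. Dedicated scanners ship far more complete rule sets.
const SECRET_PATTERNS = [
  /(api[_-]?key|secret|token|password)\s*[:=]\s*["'][^"']{8,}["']/i,
];

// Returns the 1-based line numbers that look like they contain a hardcoded secret.
function findHardcodedSecrets(source) {
  const hits = [];
  source.split("\n").forEach((line, index) => {
    if (SECRET_PATTERNS.some((pattern) => pattern.test(line))) {
      hits.push(index + 1);
    }
  });
  return hits;
}

const sample = [
  'const API_KEY = "sk-123456789abcdef";',
  "const apiKey = process.env.API_KEY;",
  'const password = "hunter2hunter2";',
].join("\n");

console.log(findHardcodedSecrets(sample)); // [ 1, 3 ]
```

Line 2 is not flagged because the value comes from the environment rather than a quoted literal.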

Review dependencies introduced by AI. Look at package.json or requirements files and:

- Search the package name on Google or GitHub

- Check the last update date and the number of users

- Run a scan with tools like Snyk or npm audit

Do not install packages you cannot verify.

Test failure cases directly. Break the feature on purpose:

- Send incomplete requests

- Remove required fields

- Simulate expired sessions

The system should return errors, not crash or expose data.

Finally, compare the code with existing patterns in your project. Check how similar features handle validation, permissions, and data access, and make sure the new code follows the same structure.

2. Avoid hardcoding secrets

Never store secrets directly in your code. Keep API keys, passwords, and tokens outside your source files.

Define them in your environment:

API_KEY=abc123

Then access them in your code:

const apiKey = process.env.API_KEY;

This keeps secrets out of your codebase.
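One optional refinement is to fail fast when a required variable is missing, so the app never starts with an undefined key. The `requireEnv` helper below is a hypothetical name used for this sketch.

```javascript
// Read a required secret from the environment and fail fast if it is missing.
// The second argument defaults to process.env and exists mainly to make testing easier.
function requireEnv(name, env = process.env) {
  const value = env[name];
  if (value === undefined || value === "") {
    // Name the variable, never the value, in error messages and logs.
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// Usage: const apiKey = requireEnv("API_KEY");
```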

Before every commit, check for leaks using tools like git-secrets or Gitleaks. These tools scan your code and block commits that contain sensitive data.

Store sensitive credentials in a secret manager for production systems. Use tools like AWS Secrets Manager, HashiCorp Vault, or Doppler to securely store and access secrets at runtime instead of keeping them in local files.

Restrict access to each secret. Only the services or parts of your system that need a secret should be able to read it.

Rotate secrets regularly. Replace API keys and passwords on a schedule or immediately after any suspected exposure.

Finally, test for exposure. Push code to a private repository and verify that no secrets appear in:

- Commit history

- Logs

- Error messages

If a secret appears anywhere in your codebase or logs, treat it as compromised and replace it immediately.

3. Implement authentication and authorization early

Set up authentication and authorization from the start of your project.

Add authentication checks to every non-public route. In your backend, protect routes using middleware or guards (for example, JWT middleware or session checks). Every request should verify that the user is logged in before returning data.

Test this by opening routes in a private browser or using a tool like Postman without logging in. The request should return an error such as 401 Unauthorized.

Next, enforce authorization on every request. After confirming the user is logged in, check what they are allowed to access. In your backend logic, compare the authenticated user’s ID with the resource owner.

For example, when handling a request like /orders/123, fetch the order and verify:

if (order.userId !== currentUser.id) {
  return res.status(403).send("Forbidden");
}

Test this by logging in as one user and trying to access another user’s data by changing IDs in the URL or API request. The system should return 403 Forbidden.

Do not trust IDs coming from the client. Always validate them on the server. Even if the frontend hides certain data, assume users can modify requests manually.

Apply the same access control logic across the entire application. Use shared middleware, helpers, or policies instead of writing custom checks for each endpoint. This prevents gaps where some routes are left unprotected.
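A shared guard could look something like the sketch below. It is framework-agnostic on purpose: the `protect` wrapper, the shape of `req`, and the `orders` data are assumptions for this example rather than the API of any particular framework.

```javascript
// Wrap a handler so authentication and ownership are checked in one shared place.
// `loadResource` is a hypothetical lookup returning an object with a `userId` field.
function protect(loadResource, handler) {
  return (req) => {
    if (!req.user) return { status: 401, body: "Unauthorized" };
    const resource = loadResource(req.params.id);
    if (!resource) return { status: 404, body: "Not found" };
    if (resource.userId !== req.user.id) return { status: 403, body: "Forbidden" };
    return handler(req, resource);
  };
}

// Hypothetical data and routes that reuse the same guard.
const orders = new Map([
  ["123", { id: "123", userId: "u1" }],
  ["124", { id: "124", userId: "u2" }],
]);
const loadOrder = (id) => orders.get(String(id));

const viewOrder = protect(loadOrder, (req, order) => ({ status: 200, body: order }));
const cancelOrder = protect(loadOrder, (req, order) => ({
  status: 200,
  body: `cancelled ${order.id}`,
}));
```

Because both routes go through `protect`, a new endpoint cannot skip the check simply because someone forgot to copy it.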

Test unauthorized scenarios directly. Use tools like Postman or browser dev tools to:

- Remove authentication tokens

- Modify request payloads

- Replay requests with different user data

Every unauthorized request should be blocked.

Finally, include authentication and authorization checks when building every new feature. Do not add them later. When creating a new endpoint, start by adding protection first, then implement the feature logic.

4. Sanitize and validate user inputs

Treat all user input as unsafe and validate it before using it anywhere in your system.

Start by adding validation rules in your backend. Use a validation library such as Joi, Zod, or built-in framework validators to define what each field should accept.

For example:

const schema = z.object({
  email: z.string().email(),
  age: z.number().int().min(0)
});

Run this validation before processing the request. If the input does not match the schema, return an error and stop execution:

if (!schema.safeParse(req.body).success) {
  return res.status(400).send("Invalid input");
}

Do not try to fix invalid input. Reject it and require the user to send correct data.

Next, sanitize data before using it in queries or rendering it on a page. Use built-in protections like templating engines that escape HTML by default and libraries such as DOMPurify for user-generated content.

For database queries, always use parameterized queries instead of string concatenation:

// safe
db.query("SELECT * FROM users WHERE email = ?", [email]);

// unsafe
db.query(`SELECT * FROM users WHERE email = '${email}'`);

Test your input handling directly. Try:

- Entering scripts like <script>alert(1)</script>

- Submitting long or unexpected strings

- Sending invalid formats through API tools like Postman

The system should reject the input or safely escape it. It should never execute code or return unexpected data.

If your app includes file uploads, restrict file types and sizes on the server. Do not rely on client-side checks.
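As a sketch of a server-side upload check, the function below inspects the file's leading bytes instead of trusting its name or the type reported by the browser. The 2 MB limit and the choice of PNG and JPEG are assumptions for a profile-picture feature. A signature check reduces risk but does not prove a file is harmless.

```javascript
const MAX_UPLOAD_BYTES = 2 * 1024 * 1024; // assumed 2 MB limit for profile pictures

// Leading "magic bytes" that identify each allowed image format.
const IMAGE_SIGNATURES = {
  "image/png": Buffer.from([0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a]),
  "image/jpeg": Buffer.from([0xff, 0xd8, 0xff]),
};

// Returns the detected MIME type, or null if the upload should be rejected.
function detectAllowedImage(fileBuffer) {
  if (fileBuffer.length === 0 || fileBuffer.length > MAX_UPLOAD_BYTES) return null;
  for (const [mime, signature] of Object.entries(IMAGE_SIGNATURES)) {
    if (fileBuffer.subarray(0, signature.length).equals(signature)) return mime;
  }
  return null;
}
```

A script renamed to photo.png fails this check because its first bytes do not match an image signature.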

Finally, apply the same validation and sanitization rules to every input source. This includes forms, API requests, URL parameters, and file uploads. Do not assume any input is safe just because it comes from your frontend.

5. Monitor dependencies continuously

Track every dependency in your project and check it regularly for known vulnerabilities.

Start by running a dependency scan locally. Use built-in tools like:

npm audit

or

pip-audit

This shows known vulnerabilities in your current dependencies.

Next, automate this in your workflow. Add a dependency scanner such as Dependabot, Snyk, or Aikido to your repository. For example, enable Dependabot in GitHub, so it automatically scans your dependencies and opens pull requests with security fixes.

This removes the need to check manually.

Before installing any package, verify it manually:

- Search the package name on npm or PyPI

- Check the last update date

- Check the number of downloads or GitHub stars

If the package has no activity or looks suspicious, do not install it.

Lock your dependency versions to prevent unexpected changes. Use lockfiles or pinned requirements files like:

- package-lock.json

- yarn.lock

- requirements.txt (with exact versions pinned using ==)

Avoid loose versions like:

"library": "^1.0.0"

Use fixed versions instead:

"library": "1.0.0"

Update dependencies regularly. When a tool flags a vulnerability:

- Run the update (npm update or similar)

- Review what changed

- Test the affected feature

Do not delay updates, because known vulnerabilities are actively exploited.

Remove unused dependencies by checking your project files. If a package is not imported or used anywhere, delete it and run:

npm uninstall package-name

This reduces unnecessary risk.

After every update, test the feature that depends on that package. For example:

- update upload library → test file uploads

- update auth library → test login flow

Finally, verify that everything works and that no vulnerabilities remain:

npm audit

The scan should return no critical issues.
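As a complement to the scans in this section, the advice about fixed versions can be checked with a short script. This is a sketch: the `findLooseVersions` function and the example package data are hypothetical, and it treats anything that is not an exact version number as loose.

```javascript
// An exact version is three dot-separated numbers with an optional prerelease or build tag,
// for example "1.0.0" or "2.1.0-beta.1".
const EXACT_VERSION = /^\d+\.\d+\.\d+(-[0-9A-Za-z.-]+)?(\+[0-9A-Za-z.-]+)?$/;

// Returns "name@range" for every dependency that is not pinned to an exact version.
function findLooseVersions(pkg) {
  const loose = [];
  for (const field of ["dependencies", "devDependencies"]) {
    for (const [name, range] of Object.entries(pkg[field] || {})) {
      if (!EXACT_VERSION.test(range)) loose.push(`${name}@${range}`);
    }
  }
  return loose;
}

// Hypothetical contents of a package.json file.
const examplePackageJson = {
  dependencies: { library: "^1.0.0", "other-library": "1.0.0" },
  devDependencies: { "test-tool": "~2.3.1" },
};

console.log(findLooseVersions(examplePackageJson));
// [ 'library@^1.0.0', 'test-tool@~2.3.1' ]
```

Pinning direct dependencies does not pin the packages they pull in, so a committed lockfile is still needed.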

6. Limit permissions of AI agents

Give each AI agent only the access it needs to complete its task.

Start by defining the agent’s scope in concrete terms. Write down exactly what it should do, for example: “read support tickets from /tickets API” or “summarize files from /reports folder.” Do not proceed until this scope is clear.

Create restricted credentials instead of using full-access keys. When generating an API key or token, configure permissions to allow only specific actions. For example:

- Allow – GET /tickets

- Block – POST, DELETE, or admin actions

Avoid using root, admin, or full-access credentials.

Limit access to specific resources. In your system or cloud provider:

- Grant access to one folder instead of the whole storage bucket

- Allow one database table instead of the entire database

- Restrict APIs to specific endpoints

Use IAM roles or permission policies to enforce this.
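The same idea can be sketched in application code as a deny-by-default allowlist. The policy shape and function names below are assumptions for illustration. In practice, the enforcement should live in your cloud provider's or API gateway's permission system, not only in the agent's own code.

```javascript
// Hypothetical policy for a ticket-reading agent: anything not listed is denied.
const ticketAgentPolicy = {
  allow: [{ method: "GET", pathPrefix: "/tickets" }],
};

function isAllowed(policy, method, path) {
  // Reject path traversal outright instead of trying to normalize it.
  if (path.split("/").includes("..")) return false;
  return policy.allow.some(
    (rule) =>
      rule.method === method.toUpperCase() &&
      (path === rule.pathPrefix || path.startsWith(rule.pathPrefix + "/"))
  );
}

// Returns the status an agent request should receive before it reaches the real API.
function authorizeAgentRequest(policy, method, path) {
  return isAllowed(policy, method, path) ? 200 : 403;
}
```

Matching on the prefix plus a slash keeps a path like /ticketsadmin from slipping through as if it were part of /tickets.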

Start with read-only access. Assign permissions that only allow reading data. Then test the agent. Only add write or delete permissions if the task fails without them.

Run the agent in a test environment first. Connect it to staging data instead of production. Verify its behavior before giving it access to real users or systems.

Test permissions by trying to break them. Use the agent or API manually to:

- Access a different folder

- Modify data when it should only read

- Call endpoints outside its scope

Each attempt should fail with an “access denied” or 403 Forbidden response.

Enable logging for all agent actions. Record:

- Which files it accesses

- Which APIs it calls

- What actions it performs

Review logs after running the agent. Confirm it only performs the actions you defined earlier.

If the agent can do more than intended, reduce its permissions and test again.
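The logging and review steps above can be sketched as a thin wrapper around the agent's tools. The `withAuditLog` and `findOutOfScopeCalls` names, and the idea of tools as plain functions, are assumptions for this example.

```javascript
// Wrap an agent's tools so every call is recorded before it runs.
// `tools` is a hypothetical map of tool name -> function.
function withAuditLog(tools, log = []) {
  const wrapped = {};
  for (const [name, fn] of Object.entries(tools)) {
    wrapped[name] = (...args) => {
      log.push({ tool: name, args, at: new Date().toISOString() });
      return fn(...args);
    };
  }
  return { tools: wrapped, log };
}

// Compare what the agent actually did against the scope defined up front.
function findOutOfScopeCalls(log, allowedTools) {
  return log.filter((entry) => !allowedTools.includes(entry.tool));
}
```

If `findOutOfScopeCalls` returns anything after a run, the agent used a tool it was never meant to have, which is the signal to reduce its permissions and test again.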

What tools help secure AI-generated code?

Vibe coding tools help you build applications quickly, but they do not secure them by default. To protect AI-generated code, you need a separate set of tools that fall into three groups: code scanning, dependency scanning, and runtime protection.

Tools like Aikido, Snyk, and CodeAnt cover different parts of this workflow, while CSA’s AI Controls Matrix helps define which controls to apply.

Code scanning (SAST)

Static application security testing, or SAST, scans source code for risky patterns before you ship.

This is useful for catching issues in AI-generated code, such as unsafe input handling, exposed secrets, or weak logic in pull requests and IDEs. Tools in this group include Aikido, CodeAnt, and Snyk Code.

Dependency scanning

Dependency scanners check the third-party packages your code relies on and flag known vulnerabilities. This matters in vibe coding because AI can suggest outdated libraries or even package names that do not exist, so every suggestion should be verified before installation.

Snyk and Aikido both scan open-source dependencies, and CodeAnt also includes software composition analysis in its platform.

Runtime protection

Some problems only appear under real traffic, not during code review. Runtime protection watches what happens after the code is deployed and helps detect or block live attacks.

Aikido is one example of a platform that extends from code and dependency scanning into runtime threat detection and blocking.

Where CSA fits

CSA is different from the tools above. It is not a scanner. The Cloud Security Alliance’s AI Controls Matrix is a vendor-neutral controls framework for securing AI systems, so it is best used to define your process, review requirements, and security baseline around AI-generated code.

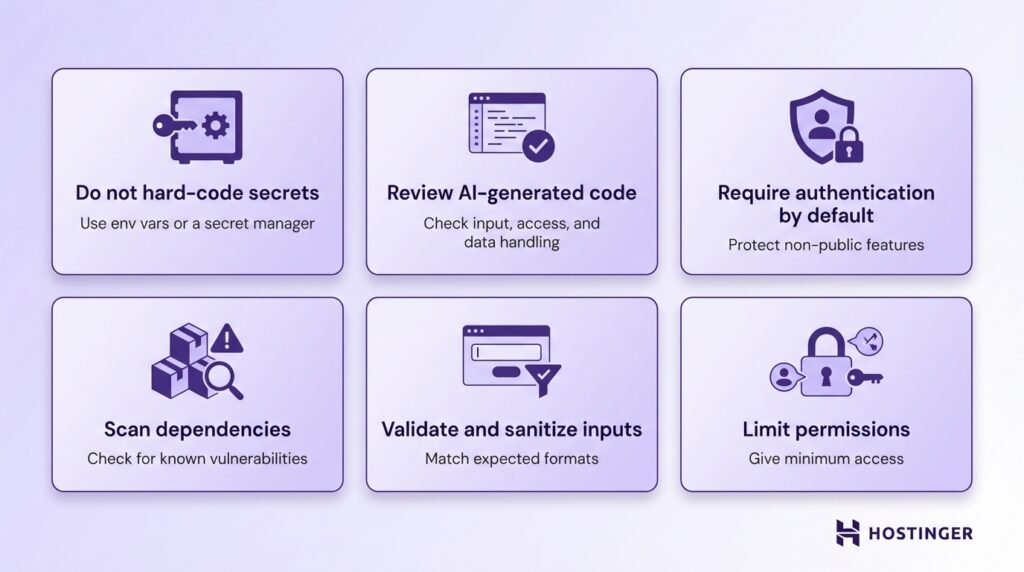

Secure vibe coding checklist

Use this checklist as a quick way to catch the most common risks before code reaches production.

- Do not hard-code secrets – keep API keys, passwords, and tokens out of your code by storing them in environment variables or a secret manager.

- Review all AI-generated code – treat AI output as a draft and check how it handles input, access control, and sensitive data before using it.

- Require authentication by default – make login the standard for any non-public feature, so endpoints are not left open by accident.

- Scan dependencies for known vulnerabilities – check third-party packages regularly to avoid pulling insecure or outdated code into your app.

- Validate and sanitize user inputs – ensure all input matches expected formats and cannot be used to inject harmful code.

- Limit permissions for AI agents and tools – give only the minimum access needed so mistakes or misuse stay contained.

What to know before using vibe coding in production

Vibe coding gives you speed, but it also increases security risk.

AI lets you quickly generate working features, but faster code does not necessarily mean safer code. When more code is produced in less time, it becomes easier to skip reviews and miss security issues.

This changes how you need to think about development. Do not treat AI-generated code as trusted. Treat it as a draft that must be reviewed, tested, and validated before release.

For example, AI can generate a working dashboard in minutes. The pages load and the data appears, but without proper access checks, users may see data they should not. The feature works, but it is not secure.

To use vibe coding safely in production, follow web application security best practices by default. Require authentication, validate input, protect secrets, review dependencies, and limit permissions.

Security also needs to be built into your workflow. Do not rely on manual checks at the end. Add automated scans, enforce reviews, and test edge cases as part of development.

The platform you use also affects how safely you can build and launch AI-generated apps. For instance, the Hostinger Horizons vibe coding tool combines prompt-based app building, built-in hosting, and 1-click publishing in a single environment, reducing the setup work that often leads to mistakes during deployment.

It also includes infrastructure-level protections such as a firewall, malware scanning, and DDoS protection, along with features like project version history and integrated backend support for accounts, logins, and data storage.

This helps with launch speed and operational safety, but it does not replace application-level security. You still need to review generated logic, validate inputs, enforce authentication and authorization, protect secrets, and test how the app behaves before publishing it.